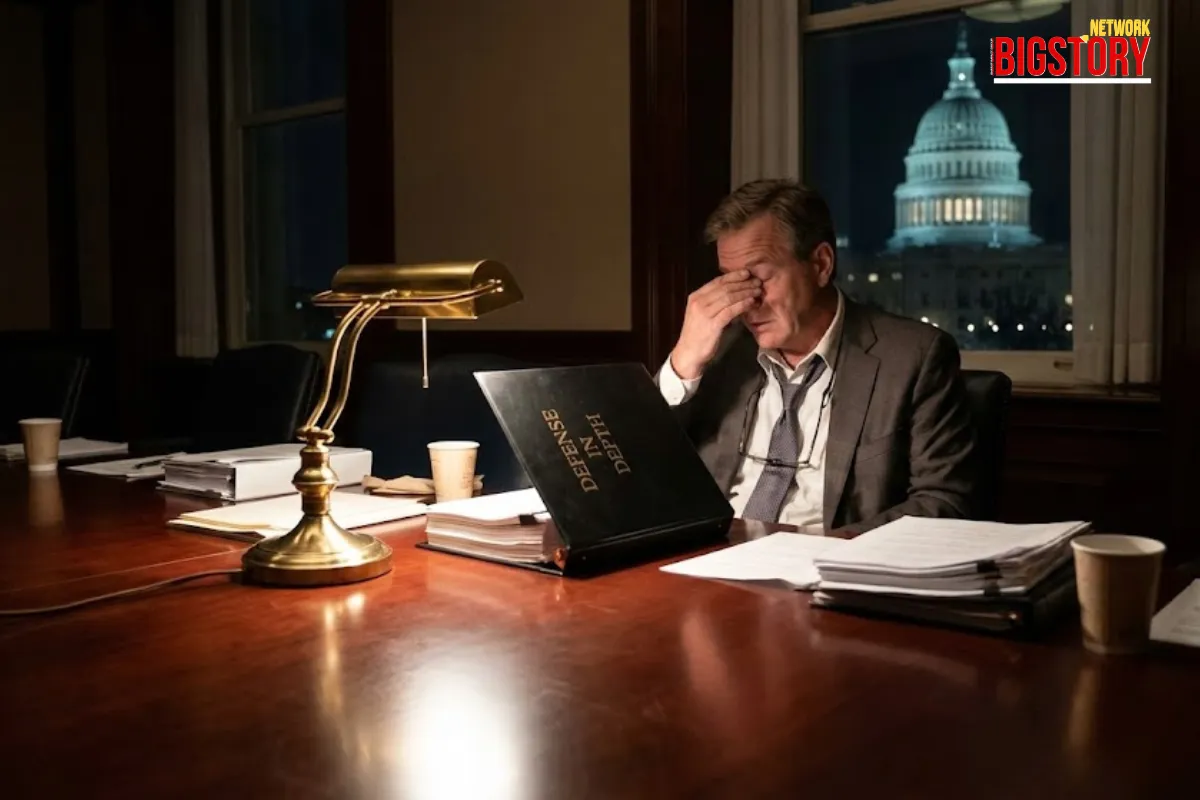

Washington is being urged to treat artificial intelligence not just as a software innovation, but as a potential weapon of mass destruction. Following a year-long investigation involving over 200 stakeholders from top AI labs and the intelligence community, Gladstone AI has delivered its "Defense in Depth" Action Plan to the US State Department, which commissioned it. The report does not mince words: if left unregulated, advanced AI and AGI development could pose catastrophic, extinction-level threats to the human species.

This matters because the report bridges the historical gap between Silicon Valley optimism and Pentagon paranoia. With major frontier labs publicly projecting that human-level AGI could be reached by 2028, the report urges the US government to lay the groundwork for unprecedented interventions. These include outlawing the training of AI models above certain compute thresholds and establishing a dedicated federal AI regulatory agency to monitor the global hardware supply chain.

The "BigStory" Angle (The "Weaponization Theft" Gap)

Mainstream media outlets have predictably focused on the "Terminator" narrative—the fear of a rogue, sentient AI deciding to wipe out humanity. While "Loss of Control" is one part of the assessment, the media is entirely missing the report's most urgent near-term warning: The "Weaponization Theft" Gap.

The report explicitly warns that cybersecurity at major frontier labs is currently inadequate to protect against state-sponsored espionage. If a US-based lab develops a highly advanced, potentially dangerous model, and its trained parameters (the model weights) are stolen by an adversarial nation or non-state actor, the model effectively becomes an unrecallable "digital WMD." Weaponizing AI to launch autonomous cyberattacks or engineer biological pathogens does not require the AI to be sentient; it only requires the AI to fall into the wrong hands.

The Context (Rapid Fire)

- The Trigger: In May 2023, hundreds of AI researchers and executives, including OpenAI CEO Sam Altman, signed a stark one-sentence statement declaring that mitigating the risk of extinction from AI should be a global priority alongside pandemics and nuclear war, adding pressure on the government to seek actionable regulatory frameworks.

- The Backstory: President Biden’s October 2023 Executive Order established the first roadmap for AI safety, but the Gladstone report pushes further, calling for a hard cap on compute power to actively moderate the competitive race dynamics between tech giants.

- The Escalation: Moving into 2025 and 2026, the focus has shifted internationally with the rollout of the International Network of AI Safety Institutes, an attempt to align the US, UK, and EU under a multilateral framework to prevent "rogue" development havens.

Key Players (The Chessboard)

- Jeremie Harris (The Author): The CEO of Gladstone AI and primary author of the report. He emphasizes that the catastrophic risks are not theoretical, stating that a significant body of data suggests AI could lead to WMD-scale effects if not properly managed.

- Mark Beall (The Advocate): Co-author and former Pentagon official who views the Action Plan as a technically grounded roadmap to stabilize development, bridging the gap between national security logic and Silicon Valley innovation.

- Sam Altman (The Developer): CEO of OpenAI and head of a leading "frontier lab." His acknowledgement of existential risk forms a critical paradox: the companies driving the rapid acceleration of the technology are the same ones pleading for government guardrails.

The Implications (Your Wallet & World)

- Short Term (Data Security): If you work in tech, cloud architecture, or data security, review the "Model Weight Security" guidelines outlined in the report. The government is moving toward treating leaked model weights as permanent, severe national security breaches, which will drastically alter liability and compliance standards for developers.

- Long Term (Regulatory Capture): A deep cynicism is taking root in the open-source community. Critics argue that the "extinction-level threat" narrative is being utilized by tech monopolies to enforce regulatory capture—creating rules so expensive and complex that only the current multi-billion-dollar incumbents can afford to build frontier AI.

The Closing Question

The Gladstone report suggests that preventing an AI apocalypse requires strict government control over the hardware and compute power used to train models. Do you believe this will make humanity safer, or will it simply hand total control of our technological future to the government and a few massive corporations? Tell us in the comments.

FAQs

- Q: What is the 2024 Gladstone AI report commissioned by the State Department?

- A: It is a comprehensive assessment titled "Defense in Depth: An Action Plan to Increase the Safety and Security of Advanced AI," which warns of catastrophic national security risks from advanced AI and provides a roadmap for government regulation.

- Q: Is AI really an extinction-level threat according to the US government?

- A: While the US government has not officially adopted the stance, the State Department-commissioned Gladstone report explicitly states that unregulated advanced AI could pose an "extinction-level threat" due to weaponization and potential loss of control.

- Q: What are the 5 lines of effort in the AI Safety Action Plan?

- A: The report recommends a "Defense in Depth" strategy utilizing five lines of effort: establishing interim safeguards (like export controls), strengthening government response capacity, increasing funding for AI safety research, creating a federal AI regulatory agency (FAISA), and establishing international multilateral treaties.

- Q: How does the Gladstone report suggest regulating AI compute power?

- A: The report suggests tying hardware export licenses to on-chip monitoring technologies and mandating that AI companies obtain government permission to train and deploy any new models that require computing power above a certain threshold.

Sources:

- Time: AI Poses Extinction-Level Risk, State-Funded Report Says

- Gladstone AI: Defense in Depth: An Action Plan to Increase the Safety and Security of Advanced AI

- CNN: State Department Report Warns of AI Apocalypse

- Reuters: Biden issues executive order on safe, secure, and trustworthy AI

Sseema Giill

